
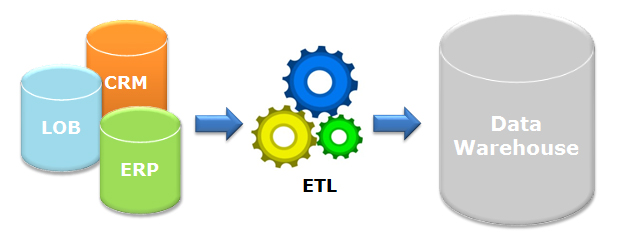

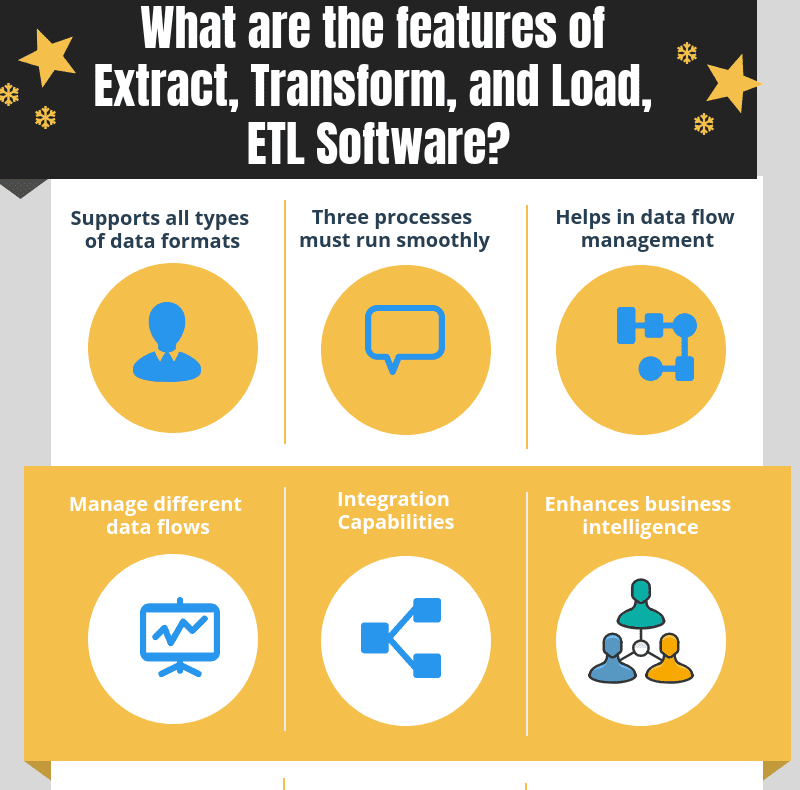

ETL and Azure Data Factory both play a key role in extracting value from your data

In today’s data-driven world, extracting, transforming, and loading (ETL) processes play a vital role in managing and analyzing large volumes of data. ETL is a crucial process in data integration and analytics, enabling businesses to extract data from various sources, transform it into a consistent and meaningful format, and load it into a target system for analysis, reporting, or other purposes.

Ensuring that this process is respected, repeatable, and reliable is essential. There are great tools that achieve this, and in this blog post we introduce one of the best options for you: Azure Data Factory (ADF). Whether you are seeking to understand the basic concepts of ETL or to learn which Azure service can be used to handle this process, you are reading the right post!

And if you’ve never heard of ETL or ADF, no worries, it is a good opportunity to enhance your knowledge. I will explain what ETL is and what Azure Data Factory is. It is important to know that this blog post gives an overview of ETL and Azure Data Factory and what they are for. I’m afraid there are no hands-on, expert-level scenarios here. However, you will get a simple scenario as an example of how to work with ETL and ADF.

Before diving into Azure Data Factory, let’s quickly recap the basic concepts of ETL.

ETL refers to a three-step process: extracting data from various sources, transforming it into a consistent format, and loading it into a target destination. This process ensures that data is properly prepared for analysis, reporting, or other downstream operations. The ETL process serves as the foundation for data integration, ensuring that data is accurate, consistent, and usable across different systems and applications. It involves several key steps that work together to shape raw data into valuable insights. Let’s take a closer look at each phase of the ETL process:

Extraction

The extraction phase involves gathering data from single or multiple sources, which can include databases, files, web services, APIs, cloud storage, and more. This step typically requires understanding the structure, format, and accessibility of the source data. Depending on the source, extraction methods can range from simple data exports to complex queries or data replication techniques.

Transformation

After data is extracted, it often requires cleansing, filtering, reformatting, and combining with other datasets to ensure consistency and quality. This phase involves applying various data transformation operations, such as data cleaning, normalization, validation, enrichment, and aggregation. Transformations may also involve complex business rules, calculations, or data validation against reference data.

Loading

Once the data is transformed and prepared, it is loaded into a target system or destination. The target can be a data warehouse, a database, a data lake, or any other storage infrastructure optimized for analytics or reporting. Loading can be done in different ways, including full loads (replacing all existing data), incremental loads (updating or appending only new or changed records), or a combination of both.

ETL pipelines can have different loading strategies, such as batch processing (scheduled at specific intervals) or real-time processing (triggered by events or changes in the data source). The choice of loading strategy depends on the data requirements, latency needs, and overall system architecture.

Validation and quality assurance are required throughout the ETL process

Throughout the ETL process, data quality and integrity are of utmost importance. It is crucial to perform data validation checks, ensuring that the transformed data meets predefined quality standards. Validation includes verifying data completeness, accuracy, consistency, conformity to business rules, and adherence to data governance policies. Any data that fails validation can be flagged, logged, or even rejected for further investigation.

As data volumes and complexity continue to increase, modern data integration platforms, such as Azure Data Factory, have emerged to streamline and automate the ETL process. These platforms offer visual interfaces, pre-built connectors, and scalable infrastructure to facilitate data extraction, transformation, and loading tasks.
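
To make the phases described in this post more concrete, here is a minimal sketch of an ETL run in plain Python, using only the standard library. It is not Azure Data Factory code and does not use any ADF API; the sample data, the `sales` table, and the function names (`extract`, `transform`, `validate`, `load`, `run_pipeline`) are all hypothetical and chosen purely for illustration. It shows extraction from a CSV export, a cleaning transformation, a validation step that rejects bad records, and an incremental load into a SQLite table.

```python
import csv
import io
import sqlite3

# Hypothetical source data: a CSV export with inconsistent formatting.
RAW_CSV = """id,name,amount
1, Alice ,10.50
2,BOB,not-a-number
3,carol,7
"""


def extract(raw_text):
    """Extraction: read records from a source (here, an in-memory CSV export)."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(row):
    """Transformation: clean and normalize one record."""
    try:
        amount = float(row["amount"])
    except ValueError:
        amount = None  # unparseable values are left for the validation step
    return {
        "id": int(row["id"]),
        "name": row["name"].strip().title(),
        "amount": amount,
    }


def validate(row):
    """Validation: accept only records that meet the (assumed) quality rules."""
    return bool(row["name"]) and row["amount"] is not None and row["amount"] >= 0


def load(conn, rows):
    """Loading: incremental load that inserts new records and updates existing ones."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO sales (id, name, amount) "
        "VALUES (:id, :name, :amount)",
        rows,
    )
    conn.commit()


def run_pipeline(raw_text, conn):
    """Run extract, transform, validate, and load; return (valid, rejected)."""
    transformed = [transform(row) for row in extract(raw_text)]
    valid = [row for row in transformed if validate(row)]
    rejected = [row for row in transformed if not validate(row)]
    load(conn, valid)
    return valid, rejected


conn = sqlite3.connect(":memory:")
valid, rejected = run_pipeline(RAW_CSV, conn)
print(len(valid), "records loaded;", len(rejected), "rejected for investigation")
```

Because the load step uses `INSERT OR REPLACE` keyed on `id`, running the pipeline again with the same data updates the existing rows instead of duplicating them, which is the behavior an incremental load is meant to give. A full load would instead clear the table before inserting.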
